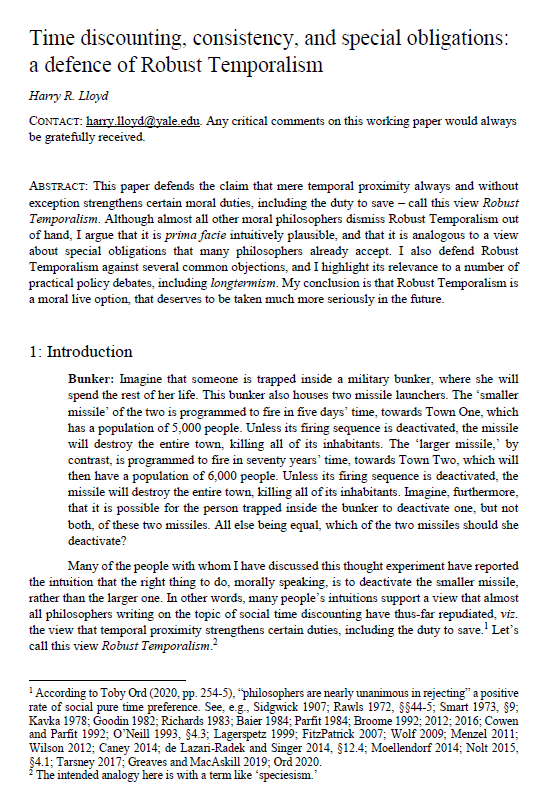

Time discounting, consistency and special obligations: a defence of Robust Temporalism

Harry R. Lloyd (Yale University)

GPI Working Paper No. 11-2021

This is the winning entry of the Essay Prize for global priorities research 2021. The uploaded paper is the full, revised draft of the abridged paper submitted for the prize competition.

This paper defends the claim that mere temporal proximity always and without exception strengthens certain moral duties, including the duty to save – call this view Robust Temporalism. Although almost all other moral philosophers dismiss Robust Temporalism out of hand, I argue that it is prima facie intuitively plausible, and that it is analogous to a view about special obligations that many philosophers already accept. I also defend Robust Temporalism against several common objections, and I highlight its relevance to a number of practical policy debates, including longtermism. My conclusion is that Robust Temporalism is a live moral option that deserves to be taken much more seriously in the future.

Other working papers

Quadratic Funding with Incomplete Information – Luis M. V. Freitas (Global Priorities Institute, University of Oxford) and Wilfredo L. Maldonado (University of Sao Paulo)

Quadratic funding is a public good provision mechanism that satisfies desirable theoretical properties, such as efficiency under complete information, and has been gaining popularity in practical applications. We evaluate this mechanism in a setting of incomplete information regarding individual preferences, and show that this result only holds under knife-edge conditions. We also estimate the inefficiency of the mechanism in a variety of settings and show, in particular, that inefficiency increases…

Desire-Fulfilment and Consciousness – Andreas Mogensen (Global Priorities Institute, University of Oxford)

I show that there are good reasons to think that some individuals without any capacity for consciousness should be counted as welfare subjects, assuming that desire-fulfilment is a welfare good and that any individuals who can accrue welfare goods are welfare subjects. While other philosophers have argued for similar conclusions, I show that they have done so by relying on a simplistic understanding of the desire-fulfilment theory. My argument is intended to be sensitive to the complexities and nuances of contemporary…

Altruism in governance: Insights from randomized training – Sultan Mehmood (New Economic School), Shaheen Naseer (Lahore School of Economics) and Daniel L. Chen (Toulouse School of Economics)

Randomizing different schools of thought in training altruism finds that training junior deputy ministers in the utility of empathy renders at least a 0.4 standard deviation increase in altruism. Treated ministers increased their perspective-taking: blood donations doubled, but only when blood banks requested their exact blood type. Perspective-taking in strategic dilemmas improved. Field measures such as orphanage visits and volunteering in impoverished schools also increased, as did their test scores in teamwork assessments…