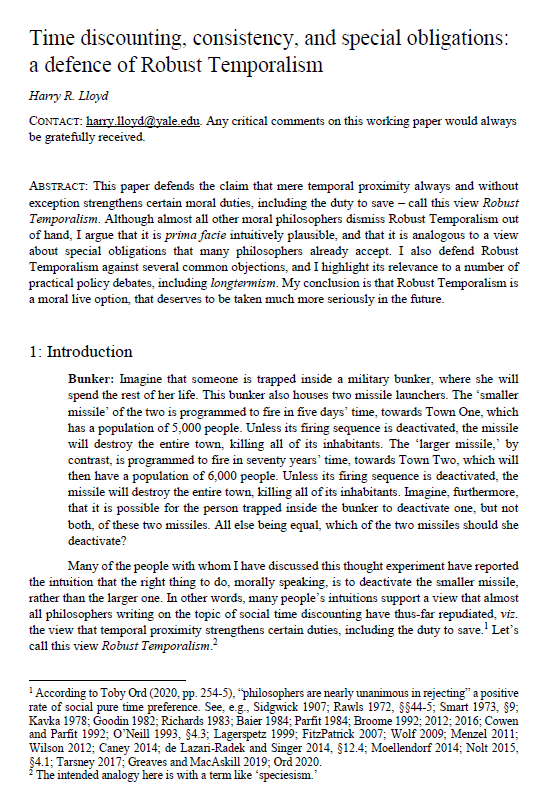

Time discounting, consistency and special obligations: a defence of Robust Temporalism

Harry R. Lloyd (Yale University)

GPI Working Paper No. 11-2021

This is the winning entry of the Essay Prize for global priorities research 2021. The uploaded paper is the full, revised draft of the abridged paper submitted for the prize competition.

This paper defends the claim that mere temporal proximity always and without exception strengthens certain moral duties, including the duty to save – call this view Robust Temporalism. Although almost all other moral philosophers dismiss Robust Temporalism out of hand, I argue that it is prima facie intuitively plausible, and that it is analogous to a view about special obligations that many philosophers already accept. I also defend Robust Temporalism against several common objections, and I highlight its relevance to a number of practical policy debates, including longtermism. My conclusion is that Robust Temporalism is a live moral option that deserves to be taken much more seriously in the future.

Other working papers

Maximal cluelessness – Andreas Mogensen (Global Priorities Institute, University of Oxford)

I argue that many of the priority rankings that have been proposed by effective altruists seem to be in tension with apparently reasonable assumptions about the rational pursuit of our aims in the face of uncertainty. The particular issue on which I focus arises from recognition of the overwhelming importance…

Moral uncertainty and public justification – Jacob Barrett (Global Priorities Institute, University of Oxford) and Andreas T Schmidt (University of Groningen)

Moral uncertainty and disagreement pervade our lives. Yet we still need to make decisions and act, both in individual and political contexts. So, what should we do? The moral uncertainty approach provides a theory of what individuals morally ought to do when they are uncertain about morality…

Longtermism in an Infinite World – Christian J. Tarsney (Population Wellbeing Initiative, University of Texas at Austin) and Hayden Wilkinson (Global Priorities Institute, University of Oxford)

The case for longtermism depends on the vast potential scale of the future. But that same vastness also threatens to undermine the case for longtermism: If the future contains infinite value, then many theories of value that support longtermism (e.g., risk-neutral total utilitarianism) seem to imply that no available action is better than any other. And some strategies for avoiding this conclusion (e.g., exponential time discounting) yield views that…