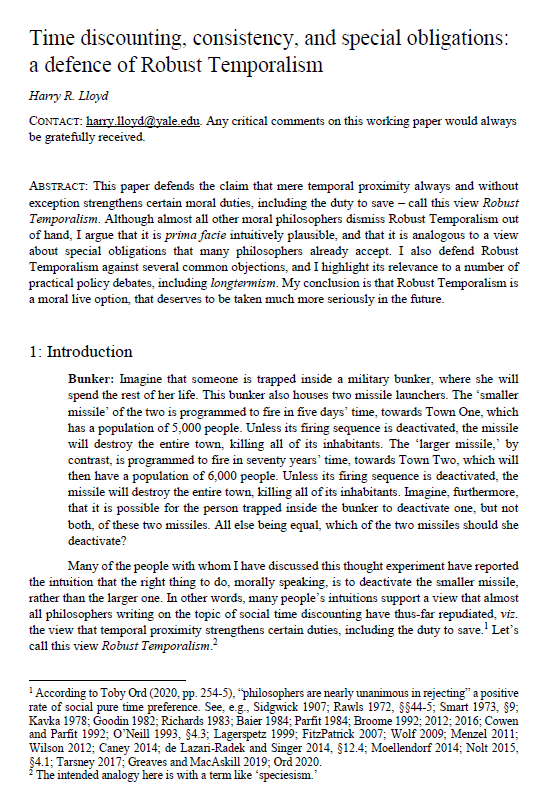

Time discounting, consistency and special obligations: a defence of Robust Temporalism

Harry R. Lloyd (Yale University)

GPI Working Paper No. 11-2021

This is the winning entry of the Essay Prize for global priorities research 2021. This working paper is the full, revised version of the abridged paper submitted to the prize competition.

This paper defends the claim that mere temporal proximity always and without exception strengthens certain moral duties, including the duty to save – call this view Robust Temporalism. Although almost all other moral philosophers dismiss Robust Temporalism out of hand, I argue that it is prima facie intuitively plausible, and that it is analogous to a view about special obligations that many philosophers already accept. I also defend Robust Temporalism against several common objections, and I highlight its relevance to a number of practical policy debates, including longtermism. My conclusion is that Robust Temporalism is a live moral option that deserves to be taken much more seriously in the future.